Unfortunately the basic assumption here does not hold, because the serialization you have in mind is different from the serialization we have in Airflow. The serialization only stores DAG structure and configuration, not Python code. A Python DAG is much more than a single Python file: it is the byte code of the class, but also potentially imported classes and even files that are read by the Python code placed in the DAG folder and any of its subfolders, potentially loaded dynamically by your Python code based on any factors.

There are a number of discussions on how to do what you want, resulting in ideas like DAG Fetcher or DAG Manifest. They involve changing and limiting how DAGs are written and potentially annotated (and serialized, including all the information necessary not only to know the DAG structure but also to execute it).

A lot of the challenges and problems to solve were very nicely described in the recent talk from the Airflow Summit 2022, where Airbnb explained how they have done something similar internally, what kind of limitations they had to introduce, and how much they had to enforce internally to make it happen. This is PRECISELY about making DAGs submittable via REST API. I recommend that anyone who wants to take part further in the discussion watch that talk, as it explains everything you need to know to understand the complexities involved.

Speaking of which: once you, or anyone else who wishes to discuss it, have watched the talk, thought about those issues, and understood the complexities involved, you are free to propose and discuss (on the devlist) an AIP for that. Like everyone in the community, you are free to do it and start a devlist discussion. Just be prepared that you have to lead and get consensus on how to solve those issues in a generic way.

I would also appreciate other ideas to possibly circumvent this limitation.

Are you willing to submit a PR? No response.

I agree to follow this project's Code of Conduct.
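To illustrate the point that serializing DAG structure is not the same as serializing the code that runs, here is a toy sketch. It is not Airflow's actual serialization format; the dictionary layout, `extract`, and `serialize_structure` are all hypothetical names made up for the illustration.

```python
import json


def extract(path="data.csv"):
    # Hypothetical task logic. In a real DAG this could import other
    # modules or read files that sit next to the DAG file.
    return f"rows from {path}"


# A toy "DAG": task ids, dependencies, and the Python callables that do the work.
toy_dag = {
    "dag_id": "example",
    "tasks": {
        "extract": {"callable": extract, "upstream": []},
        "report": {"callable": lambda: "done", "upstream": ["extract"]},
    },
}


def serialize_structure(dag):
    """Keep only what describes the graph; callables are reduced to their names."""
    return json.dumps(
        {
            "dag_id": dag["dag_id"],
            "tasks": {
                task_id: {
                    "callable_name": task["callable"].__name__,
                    "upstream": task["upstream"],
                }
                for task_id, task in dag["tasks"].items()
            },
        },
        sort_keys=True,
    )


serialized = serialize_structure(toy_dag)
restored = json.loads(serialized)

# The graph survives the round trip, but only the *name* of each callable does.
print(restored["tasks"]["report"]["upstream"])
print(restored["tasks"]["extract"]["callable_name"])
```

The restored structure is enough to draw the graph, but nothing in it can be executed without the original Python source and whatever that source imports or reads.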
Requiring actual Python files to be stored somewhere adds unnecessary complexity to each deployment.
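In the workflow this issue describes, a JSON definition is rendered into a Python DAG file from a template before being uploaded. A minimal sketch of that generation step follows. It uses the standard library's `string.Template` as a dependency-free stand-in for Jinja2, and the JSON field names (`dag_id`, `schedule`, `task_id`, `bash_command`) are assumptions made up for the example. The generated file targets Airflow 2 style imports and arguments.

```python
import json
from string import Template

# Template for the generated DAG file. The generated code is only text here;
# Airflow itself is not imported or required to run this sketch.
DAG_TEMPLATE = Template('''\
from datetime import datetime

from airflow import DAG
from airflow.operators.bash import BashOperator

with DAG(
    dag_id=$dag_id,
    start_date=datetime(2022, 1, 1),
    schedule_interval=$schedule,
    catchup=False,
) as dag:
    BashOperator(task_id=$task_id, bash_command=$bash_command)
''')


def render_dag_file(definition_json: str) -> str:
    """Render Python source for one DAG from a JSON definition (hypothetical schema)."""
    definition = json.loads(definition_json)
    # repr() turns each value into a quoted Python literal, so the values
    # cannot break out of the generated source.
    return DAG_TEMPLATE.substitute({key: repr(value) for key, value in definition.items()})


definition_json = json.dumps(
    {
        "dag_id": "nightly_export",
        "schedule": "@daily",
        "task_id": "export",
        "bash_command": "echo exporting",
    }
)

dag_source = render_dag_file(definition_json)
print(dag_source)
```

The resulting string would then be written to a `.py` file and uploaded to wherever the running Airflow instance reads its DAGs from, which is exactly the extra step the issue is asking to avoid.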

Thanks for all the great work, I’ve been loving working with Airflow!

Given that all DAGs are serialized in the database (reference), it feels like a POST request in the Airflow REST API for creating DAGs would be a very useful improvement! It could accept already serialized DAGs, for example. Is this something that might be considered for an upcoming version?

Use case/motivation

My context: I’m working on a project where I start with JSON files defining newly created DAGs. I then have to turn those into Python files (I use Jinja2 templates for that) and upload the newly created .py files to storage somewhere, where a running instance of Airflow can read the new DAGs. The above approach works, but certainly things could be better.

You can use the Software UI or the Astro CLI to create a service account. If you just need to call the Airflow REST API once, you can create a temporary Authentication Token (expires in 24 hours) on Astronomer in place of a long-lasting service account.

Create a service account using the Software UI

To create a service account using the Software UI: Give your service account a Name, User Role, and Category (Optional).

Note: In order for a service account to have permission to push code to your Airflow Deployment, it must have either the Editor or Admin role. For more information on Workspace roles, refer to Roles and Permissions.

Depending on your use case, you may want to store the resulting API key in an Environment Variable or secret management tool of choice.

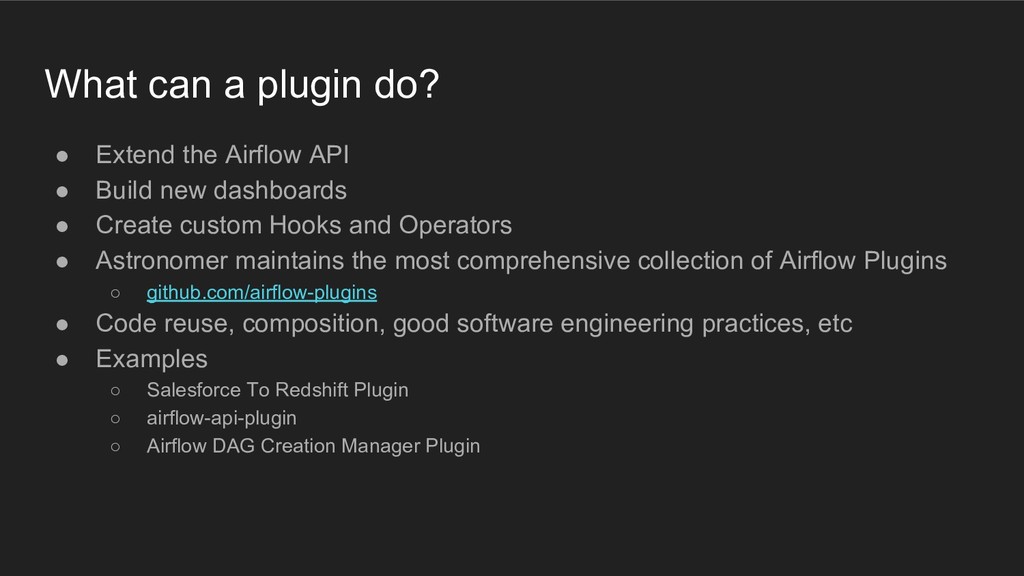

Apache Airflow is an extensible orchestration tool that offers multiple ways to define and orchestrate data workflows. For users looking to automate actions around those workflows, Airflow exposes a stable REST API in Airflow 2 and an experimental REST API for users running Airflow 1.10. For example, to externally trigger DAG runs without accessing your Airflow Deployment directly, you can make an HTTP request in Python or cURL to the corresponding endpoint in the Airflow REST API.

To get started, you need a service account on Astronomer to authenticate.

Step 1: Create a service account on Astronomer

The first step to calling the Airflow REST API on Astronomer is to create a Deployment-level Service Account, which assumes a user role and set of permissions and outputs an API key that you can use to authenticate your request.
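As a rough sketch of what such an HTTP request could look like in Python, the snippet below builds (but does not send) a request to the Airflow 2 stable REST API endpoint for creating a DAG run. The base URL, the DAG id, the `AIRFLOW_API_KEY` environment variable name, and the choice to pass the API key directly in the `Authorization` header are all assumptions for illustration; check your own Deployment's URL and authentication scheme before relying on it.

```python
import json
import os
import urllib.request


def build_trigger_request(airflow_base_url, dag_id, api_key, conf=None):
    """Build a POST request that asks Airflow 2 to create a new DAG run."""
    url = f"{airflow_base_url.rstrip('/')}/api/v1/dags/{dag_id}/dagRuns"
    body = json.dumps({"conf": conf or {}}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            # Assumption: the service account API key is sent as-is.
            "Authorization": api_key,
            "Content-Type": "application/json",
        },
    )


# Hypothetical values; the key is read from an environment variable
# rather than hard-coded.
request = build_trigger_request(
    "https://airflow.example.com",
    "example_dag",
    os.environ.get("AIRFLOW_API_KEY", "placeholder-key"),
)

print(request.get_method(), request.full_url)
# To actually send it: urllib.request.urlopen(request)
```

Keeping the request construction separate from sending it makes the URL and headers easy to inspect before anything reaches a live Deployment.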
